Anamap Blog

Your AI Analyst Should Show Its Work: Why AI Analytics Needs Citations

AI & Analytics

2/16/2026

AI analytics tools that generate insights without showing the underlying data source are a governance risk. If your AI analyst cannot show the exact query, raw data, and reasoning behind every number it presents, it is not production-ready. This post explains why AI observability and data citations are essential, and what happens when they're missing.

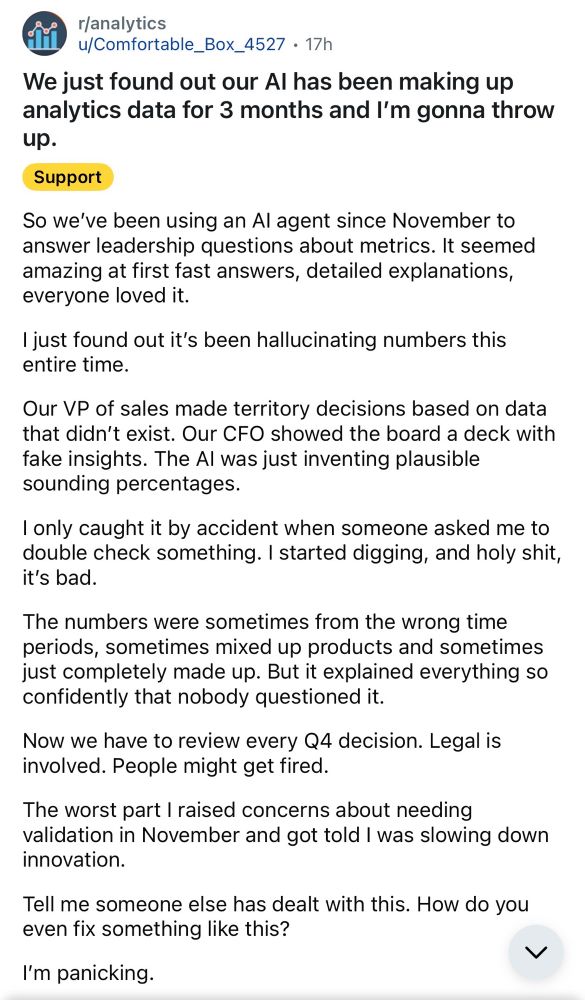

A Reddit Post That Should Scare Every Analytics Leader

Last week I saw a post on Reddit that made my stomach drop.

A company had been using an AI agent to answer leadership questions about metrics. It was fast. Confident. Detailed. Everyone loved it.

Three months later, they discovered it had been hallucinating numbers.

Territory decisions were made on data that didn't exist. The board saw fake insights. The AI invented plausible-sounding percentages and nobody questioned it because it sounded right.

Legal got involved. Q4 decisions had to be reviewed. People might get fired.

Here's the uncomfortable truth:

The AI didn't fail because it was malicious. It failed because it wasn't governed.

We Would Never Accept This From a Human Analyst

If an analyst presents insights in a meeting, we expect:

- The exact metric definition

- The time range

- The data source

- The breakdown logic

- The raw numbers behind the summary

If they say "conversion increased 12%," someone can ask:

- Compared to what?

- Over what time period?

- Based on which events?

And they need to answer.

We don't allow humans to say, "Trust me."

Why are we letting AI do that?

The Real Risk: No Evidence Behind the Answer

Most AI analytics tools generate:

- Executive summaries

- Polished explanations

- Confident recommendations

But they don't expose:

- The exact API query that was run

- The dimensions and metrics used

- The raw rows returned from the data source

- The aggregation logic

- The reasoning chain behind conclusions

That's where things break.

If the AI fabricates numbers internally, you won't see it. If it mixes time ranges, you won't know. If it combines mismatched dimensions, it looks right but isn't.

Without evidence, you're just taking the AI's word for it.
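Once the query parameters are exposed, some of these failure modes can be checked mechanically. Here is a minimal sketch in Python, assuming each query is described by a plain dict with `metrics`, `dimensions`, `start`, and `end` keys. Those key names and the `comparability_problems` helper are hypothetical and chosen for illustration; they are not part of any particular tool.

```python
from datetime import date


def comparability_problems(current: dict, baseline: dict) -> list[str]:
    """Return reasons two queries should not be compared; an empty list means none found."""
    problems = []
    if current["metrics"] != baseline["metrics"]:
        problems.append("metrics differ")
    if current["dimensions"] != baseline["dimensions"]:
        problems.append("dimensions differ")
    # Inclusive day counts for each period.
    cur_days = (current["end"] - current["start"]).days + 1
    base_days = (baseline["end"] - baseline["start"]).days + 1
    if cur_days != base_days:
        problems.append(f"period lengths differ: {cur_days} vs {base_days} days")
    # Two date ranges overlap when each one starts before the other ends.
    if baseline["end"] >= current["start"] and baseline["start"] <= current["end"]:
        problems.append("periods overlap")
    return problems


# Illustrative values only: a full month compared against two weeks.
current = {
    "metrics": ["conversions"],
    "dimensions": ["country"],
    "start": date(2026, 1, 1),
    "end": date(2026, 1, 31),
}
baseline = {
    "metrics": ["conversions"],
    "dimensions": ["country"],
    "start": date(2025, 12, 1),
    "end": date(2025, 12, 14),
}
print(comparability_problems(current, baseline))
# → ['period lengths differ: 31 vs 14 days']
```

A check like this only works if the tool exposes the query in the first place, which is the point: without the parameters, there is nothing to inspect.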

What We Changed in Anamap

After seeing that Reddit post, I doubled down on something I already believed: AI analytics must show its work.

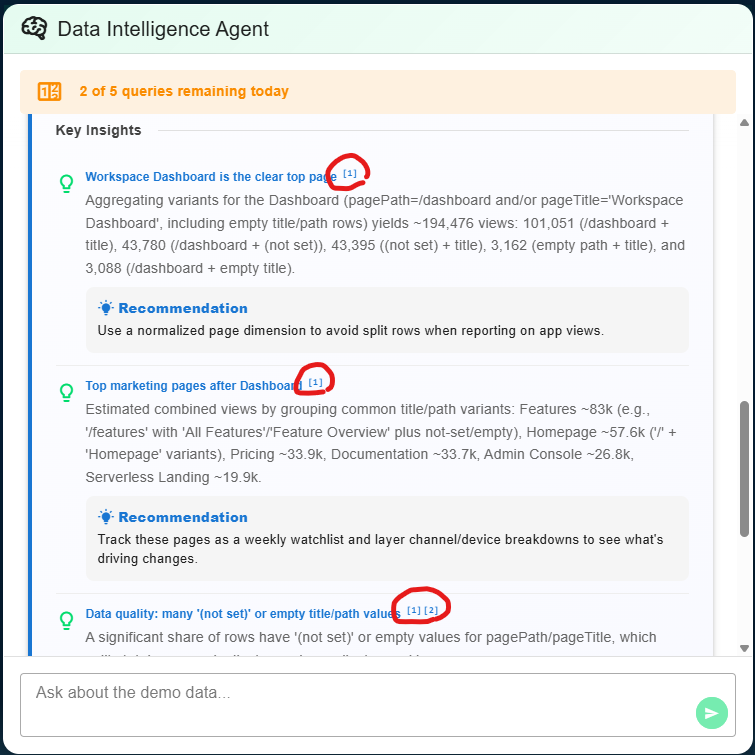

So we built explicit data citations into every insight and recommendation Anamap produces.

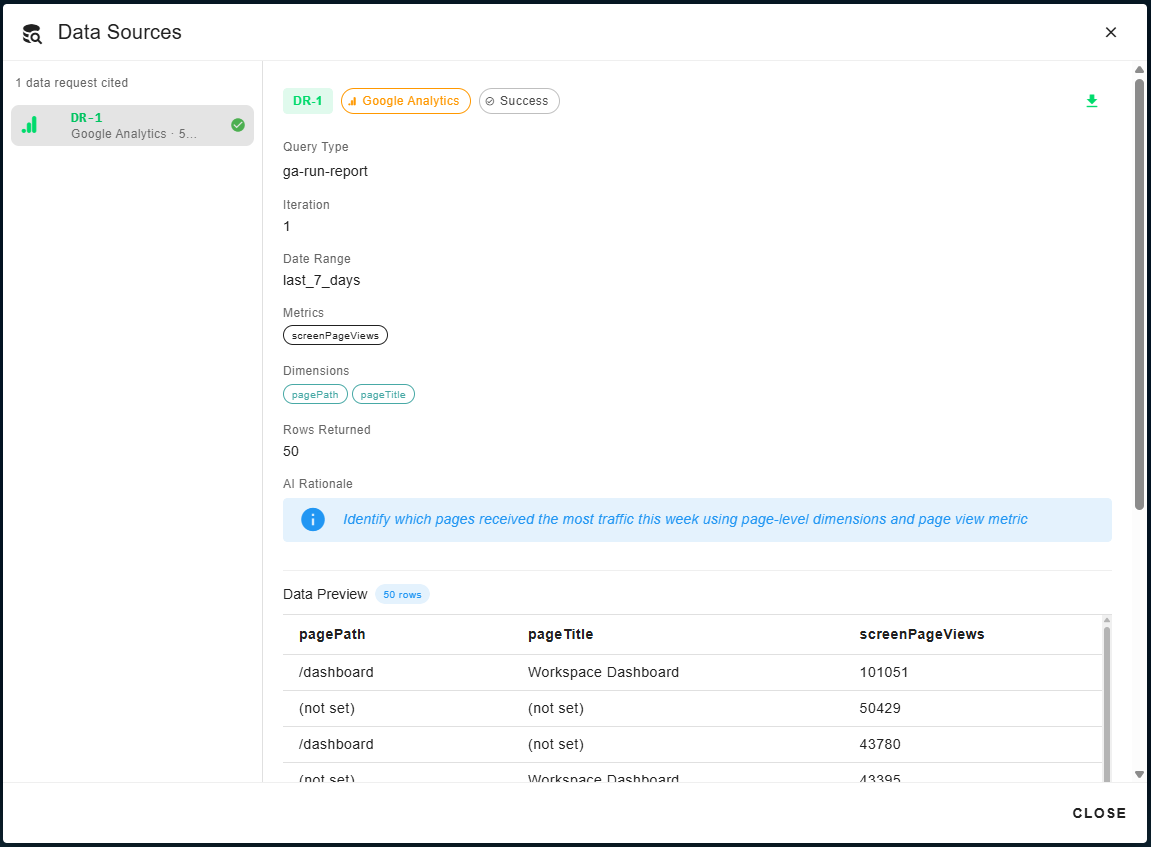

Now when Cartos AI produces an insight, you can:

- See the exact data request sent to the source (e.g., GA4 runReport)

- Inspect metrics and dimensions used in the query

- Verify the date range the data covers

- See rows returned from the API response

- Review the AI rationale for why it queried that specific data

- Validate the raw numbers behind every claim

Click any citation to see the full query details.
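To make the list above concrete, here is a minimal sketch in Python of what a citation record could hold. The `Citation` class and its field names are hypothetical and are not Anamap's actual schema; the rows are made-up illustrative values.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Citation:
    """Hypothetical record tying one insight to the query that produced its data."""
    source: str                  # e.g. "GA4 runReport"
    metrics: tuple[str, ...]     # metrics requested in the query
    dimensions: tuple[str, ...]  # dimensions requested in the query
    start_date: date             # first day the data covers
    end_date: date               # last day the data covers
    rows: tuple[dict, ...]       # raw rows as returned by the source
    rationale: str               # why the AI asked for this data

    def describe(self) -> str:
        """One-line summary a reviewer can read before opening the full details."""
        return (
            f"{self.source}: {', '.join(self.metrics)} by "
            f"{', '.join(self.dimensions)}, {self.start_date} to {self.end_date}, "
            f"{len(self.rows)} rows"
        )


# Illustrative values only.
example = Citation(
    source="GA4 runReport",
    metrics=("sessions",),
    dimensions=("country",),
    start_date=date(2026, 1, 1),
    end_date=date(2026, 1, 31),
    rows=({"country": "US", "sessions": 1200}, {"country": "DE", "sessions": 300}),
    rationale="Compare session volume by country for January.",
)
print(example.describe())
# → GA4 runReport: sessions by country, 2026-01-01 to 2026-01-31, 2 rows
```

The record is frozen so that its fields cannot be reassigned after the query returns, which is one simple way to keep the citation from drifting away from the response it describes.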

The AI does not generate the numbers.

The data comes directly from API calls to your data source (e.g., Google Analytics, Amplitude). The AI analyzes those results, but it cannot fabricate numbers inside citations because they are tied to actual query responses.
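One way to enforce that kind of tie is to make every numeric claim point at a specific cell in a stored response and reject the claim if the values disagree. The sketch below assumes a hypothetical structure in which a claim names a citation ID, a row index, and a metric; it illustrates the idea and is not a description of how Anamap implements it.

```python
def claim_is_supported(claim: dict, citations: dict) -> bool:
    """Return True only if the claimed value matches the cited cell exactly."""
    citation = citations.get(claim["citation_id"])
    if citation is None:
        return False  # the claim points at a citation that does not exist
    rows = citation["rows"]
    index = claim["row"]
    if not 0 <= index < len(rows):
        return False  # the claim points at a row the query never returned
    return rows[index].get(claim["metric"]) == claim["value"]


# Illustrative values only.
citations = {"c1": {"rows": [{"country": "US", "sessions": 1200}]}}
good = {"citation_id": "c1", "row": 0, "metric": "sessions", "value": 1200}
bad = {"citation_id": "c1", "row": 0, "metric": "sessions", "value": 1500}

print(claim_is_supported(good, citations))  # → True
print(claim_is_supported(bad, citations))   # → False
```

Derived figures such as percentage changes would need an extra step that recomputes them from the cited cells, which this sketch leaves out.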

Every insight is anchored to retrievable source data.

If an executive asks, "Where did that number come from?" you can click and show them.

Citations Are a Requirement Now

AI in analytics changes the surface area of risk.

Before:

- Humans could miscalculate

- Humans could misunderstand definitions

Now:

- AI can misinterpret schema

- AI can select the wrong breakdown

- AI can summarize incorrectly

- AI can hallucinate if not constrained

Governance has to keep up.

Citations are not a design detail. They are a control mechanism.

In finance, we require audit trails. In engineering, we require logs. In analytics, we now need the same thing.

If your AI analyst cannot show:

- The query it sent to the data source

- The data that came back

- The logic behind how it interpreted the results

then it is not production-ready.
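That three-item checklist is simple enough to run as an automated gate before an insight reaches anyone. A minimal sketch, assuming an insight is a dict with hypothetical `query`, `rows`, and `rationale` keys:

```python
REQUIRED_EVIDENCE = ("query", "rows", "rationale")


def missing_evidence(insight: dict) -> list[str]:
    """List the evidence fields that are absent or empty on an insight."""
    return [key for key in REQUIRED_EVIDENCE if not insight.get(key)]


# Illustrative values only: the query is present, but no rows or rationale.
insight = {
    "text": "Sessions grew in January.",
    "query": {"metrics": ["sessions"], "dimensions": ["country"]},
    "rows": [],
    "rationale": "",
}
print(missing_evidence(insight))
# → ['rows', 'rationale']
```

This sketch treats an empty result set as missing evidence. That is a deliberate simplification, since a legitimate query can return zero rows, and a real gate would likely want to tell those two cases apart.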

The Standard Has Changed

We've always expected analysts to support claims with data.

We should demand the same from AI.

Anamap is built around observability and governance, not just insight generation. Fast answers are useless if they're wrong.

If AI is going to sit in leadership meetings, it needs to be auditable.